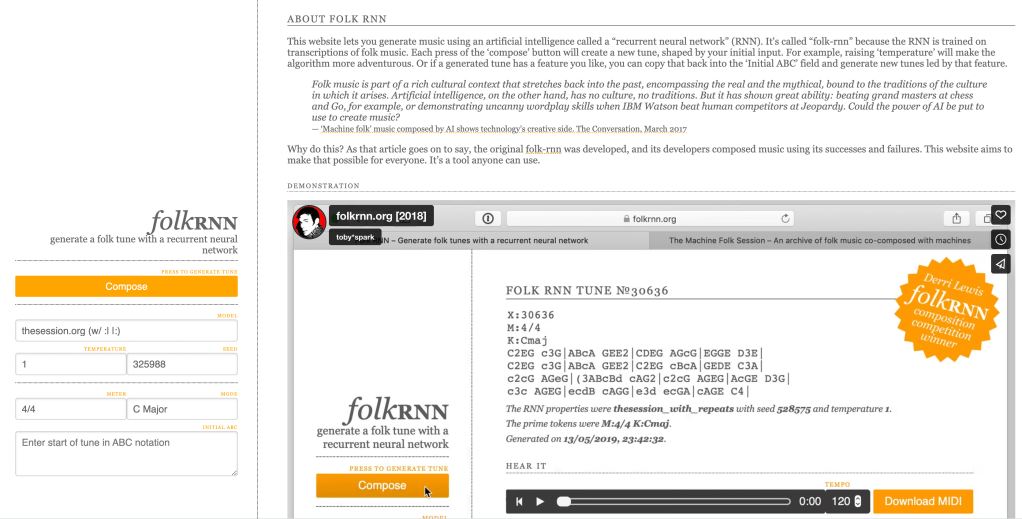

Time for our second report from the MUSAiC festival in Stockholm. This time we’re going to talk about Artificial Intelligence. Our host Bob Sturm of the Royal Institute of Technology (KTH) has been researching whether AI can successfully compose traditional music. You can check out, and more importantly try out, his FolkRNN system online at folkrnn.org. There you’ll find a website where you can choose a key and a time signature and then press a button to ask an AI to compose you a new tune in a traditional style. There’s also an intriguing temperature control that lets you turn up the heat: the hotter you go, the more FolkRNN diverges from a traditional style and introduces some rather untraditional phrases.
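For the curious, here is a rough idea of what a temperature control usually does in a neural network that generates sequences. The snippet is a minimal sketch under the assumption that FolkRNN works this way. It is not taken from the FolkRNN code. The network gives each possible next symbol a raw score, called a logit. The scores are divided by the temperature and turned into probabilities with a softmax, and the next symbol is drawn at random according to those probabilities. A low temperature sharpens the distribution towards the most likely symbol. A high temperature flattens it, so unlikely and less traditional choices turn up more often. The note tokens and scores below are hypothetical.

```python
import math
import random

def softmax_with_temperature(logits, temperature):
    """Turn raw network scores (logits) into probabilities, scaled by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the largest score for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_token(tokens, logits, temperature, rng=random):
    """Draw one next token at random, weighted by the temperature-scaled probabilities."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(tokens, weights=probs, k=1)[0]

# Hypothetical scores a model might assign to four candidate next notes (ABC-style names).
tokens = ["G", "A", "B", "^c"]
logits = [2.0, 1.0, 0.5, -1.0]

for t in (0.5, 1.0, 1.15, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs], sample_token(tokens, logits, t))
```

If you run it, the probability of the top-scoring note "G" shrinks as the temperature rises, and the rarer "^c" becomes more likely to be picked.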

How does it work? FolkRNN employs a kind of Artificial Intelligence called Deep Learning, which has become very popular in recent years as a way of training a computer to recognise patterns, perhaps most notably faces in images. Deep Learning uses neural networks that loosely mimic aspects of the human brain. Digital structures called perceptrons model the brain’s neurons and are connected into layered networks, which are then trained to recognise patterns from preselected training data. FolkRNN employs what is called a recurrent neural network (hence its name). In this kind of network, feedback loops carry information forward from one step to the next. More specifically, FolkRNN uses a technique called long short-term memory (LSTM) networks. For those interested in the gory details, take a look at Bob and team’s paper [1].
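To give a flavour of what “long short-term memory” means, below is a minimal sketch of a single LSTM cell with just one hidden unit, written in plain Python. The weights are made-up numbers chosen for illustration. They are not anything FolkRNN has learned. A real network like the one described in [1] uses many units, with vectors and matrices in place of single numbers. At each step, the cell uses “gates” to decide three things: how much of its stored memory to forget, how much new information to write, and how much of the memory to pass on as output. Those gates are what let it remember things like where a tune is in its phrase.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def lstm_step(x, h_prev, c_prev, w):
    """One time step of a single-unit LSTM cell.

    x: current input; h_prev: previous output; c_prev: previous cell memory.
    w: maps each gate name to (input weight, recurrent weight, bias).
    """
    def gate(name, activation):
        wx, wh, b = w[name]
        return activation(wx * x + wh * h_prev + b)

    f = gate("forget", sigmoid)        # fraction of old memory to keep
    i = gate("input", sigmoid)         # fraction of new information to write
    g = gate("candidate", math.tanh)   # the new information itself
    o = gate("output", sigmoid)        # fraction of memory exposed as output
    c = f * c_prev + i * g             # updated long-term memory
    h = o * math.tanh(c)               # output passed to the next step
    return h, c

# Hypothetical weights, purely for illustration.
weights = {
    "forget": (0.5, 0.5, 1.0),
    "input": (1.0, 0.5, 0.0),
    "candidate": (1.0, -0.5, 0.0),
    "output": (1.0, 0.5, 0.0),
}

h, c = 0.0, 0.0
for x in [1.0, 0.0, 0.0, 1.0]:  # a toy input sequence
    h, c = lstm_step(x, h, c, weights)
    print(round(h, 4), round(c, 4))
```

The forget gate's bias favours keeping old memory. As a result, the cell's memory `c` fades only gradually through the zero inputs rather than being wiped at once.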

Critical to the success of deep learning is finding a good data set for training the neural network. Fortunately there is one ready to hand in the form of the thousands of tunes that traditional musicians have transcribed on thesession.org, a community website that has grown into an amazing resource for humans sharing and learning traditional tunes. It also turns out to be a great resource for AI to learn from. With some preparation, as Bob and team describe in their paper, these transcriptions were used to create FolkRNN.

FolkRNN has already been used to generate tunes that have been recorded, played in sessions, and featured in competitions of AI-generated tunes. My interest, however, is as an accompanist. Improvising accompaniment to traditional tunes is something that I really enjoy in traditional sessions. There is a pleasure to be had in conjuring up suitable chords on the fly that fit the tunes. There is more pleasure in then varying voicings, styles and dynamics to introduce variations as the tunes are repeated, mirroring the rolls and cuts and other embellishments introduced by melody players. This can range from trying to bring a fresh and exciting feel to familiar favourites, to improvising along to a tune you have never heard before, predicting where it might twist and turn next. Over the years, I’ve learned some useful tactics for this. One is to play drones and modal chords that can sit under nearly any tune the first time around, before introducing more harmonically committed (less ambiguous) chords later on.

I was keen to experience how it would feel to accompany tunes composed by FolkRNN. I began by generating around thirty tunes over the course of a month, capturing a variety of jigs and reels. I experimented with the temperature setting and settled on a value of around 1.15, which delivered tunes that I found both interesting and convincing as traditional tunes. I then downloaded the MIDI files generated by FolkRNN and imported them into Logic, where I played around with transposing them into various keys and listening back at different tempos. I selected my favourites and arranged them into one set of reels and one set of jigs. As is common with sets of tunes, each tune repeats more than once. Three times is common: once to get it back into your fingers and ears, once to play it, and once to embellish it. In my final sets, several tunes repeat only a couple of times to prevent the whole set getting a bit too long. Putting the sets together also involved some manual editing of the MIDI files to create smooth musical transitions.

I spent some time playing along to both sets, trying to work out some interesting accompaniment. I experimented with various combinations of Logic’s instruments (violin, flute, accordion and harp) but wasn’t happy with the sounds. In the end, the piano sound was the most musically pleasing, so I went with it even though it’s not a regular session instrument. There are of course some marvellous pianists playing traditional music, my favourite being the late Mícheál Ó Súilleabháin. I added a little swing to the playing and cranked up the tempo, at which point the results felt good enough to play along to.

I chose just one set, the set of reels, to perform during my slot at the festival’s Machines and Folk Music panel. I gave them the not especially original placeholder name of the Stockholm Reels (though, as with all such placeholders, there’s a danger the name will stick). Here’s a quick video of me playing along live, on Carolan of course!

So how does it feel as an accompanist?

First, how is it as a composer? Well, personally, I rather like the tunes. To my mind, there’s a Scottish feel to the set overall. The first tune, in G (I must give them some proper names), has some nice variation in the second part. The triplets in the first part of the second tune give it a more rolling, embellished feel, while the second part suggests a neat transition through F#m and G to Em. The third tune is my favourite of the bunch, an unusual three-part tune that I hear as moving from E minor, through C major 7, before ending on G major. Finally, an upbeat A major tune rounds the set off on a high.

Although this is not part of FolkRNN, it is worth reflecting on how the tunes feel when played out as MIDI files on a virtual piano through Logic. On the one hand, Logic is a rock-solid player. It plays quickly and accurately in strict tempo, without mistakes and without missing a beat whatever I do. This provides me with a solid foundation and safety net for improvising chords and rhythms and trying to add the human element to the performance. On the other hand, it is neither dynamic nor responsive, and it doesn’t follow me when I change my own dynamics.

Two other aspects sit somewhere between composition and performance. The first is embellishment, as noted earlier. A skilled human player will embellish a tune each time it is repeated, adding traditional cuts, rolls and slides and perhaps more contemporary pauses and syncopations. My AI partner does none of this. The second is segueing tunes into sets, which is a key skill for session players. It involves figuring out which tunes fit well together musically, and also which fit socially. That requires guessing which tunes other people will know. It also means balancing whether you want them to be surprised and listen, or to join in.

So overall, I enjoyed playing along to these new AI-generated tunes and am really impressed by FolkRNN. However, there’s still plenty more it could do. It could embellish tunes, string tunes together into sets, play dynamically, and respond to my playing. It could even try to drive me, much as a human melody player would.

[1] Sturm, B.L., Santos, J.F., Ben-Tal, O. and Korshunova, I., 2016. Music transcription modelling and composition using deep learning. arXiv preprint arXiv:1604.08723.
